Autocompletion.io

- IT and Communications

- Pre-seed stage

- Platform

- B2B

- 2020

- 1-10 employees

- Active

- Frankfurt a. Main, DE

- Autocompletion.io Software UG

- Hesse

Profile not yet claimed

This profile was created by the Startbase analyst team. If you are a team member of this company, you can claim the profile in order to make changes to it.

About Autocompletion.io

Our product automates everything that is obvious about programming. It takes new approaches to making apps safer, more trustworthy, and editable by users. Companies that use our platform will become a big brain and a wet dream for ITIL managers.

About the team

Tillmann Karsten Vogt

Managing Director

Other startups near Autocompletion.io

Startup

2021

Frankfurt a. Main

AGT Roboplant

Startup

2010

Frankfurt a. Main

Neonga

Licensing, development, operation, and marketing of online games.

Startup

2018

Frankfurt a. Main

JL-Clean

JL-Clean is a technology company that connects professional home-textile cleaners with modern IT infrastructure.

Startup

2021

Frankfurt a. Main

Education21

SaaS for a digital school system for students from the fifth to the thirteenth grade.

Startup

2015

Frankfurt a. Main

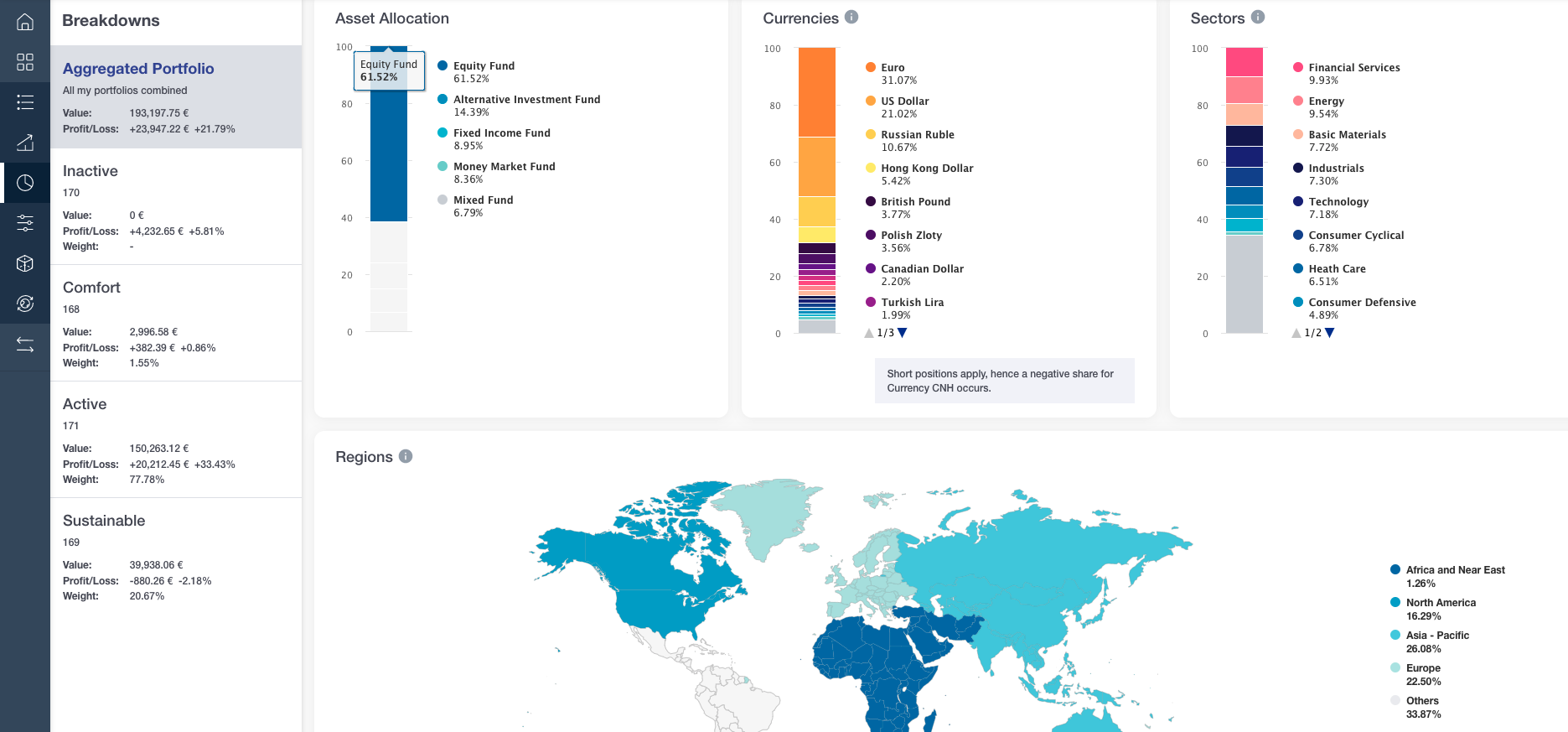

Fincite

Hybrid robo-advisory, portfolio analysis, or a financial home
